The following is an assignment from my LIS degree. The task was to write up an abstract and analysis of a particular paper (assigned by the professor).

Abstract and Analysis: The Role of Classification in Information Retrieval on the Internet: Some Aspects of Browsing Lists in Search Engines by Marthinus S. van der Walt

Abstract

In library science, the two main approaches to information retrieval are alphabetical and classified. Would the Internet benefit from a classical library approach to information retrieval?

In search engines, browsing lists include sites that have been selected and reviewed by hand, which often provide more relevant results than keyword searching. The current paper evaluates the classificatory structure of browsing lists and recommends improvements.

Fourteen (14) search engines were included in the study based on their categorized browsing lists and their general coverage of the Internet. Evaluation of the lists was limited to a review of the main classes and an examination of the specificity of the classification schemes.

Bliss’s ‘educational and scientific consensus’ seems to exist for the following fifteen (15) concepts which were found to be present in the main classes of over 50% of the sites: business, education, computing, sports, arts, health, science, entertainment, news, government, travel, internet, lifestyle, recreation, reference. However, a further fifty-five (55) main classes occurred in fewer than half of the top pages.

Bliss’s ‘collocation of related classes’ has not been observed, as classes are arranged either alphabetically or arbitrarily. Sublevels, in particular, would benefit from a logical structure where, for example, Children’s Health, Men’s Health, and Women’s Health would appear together rather than separately in an alphabetical list of subclasses.

Specificity was measured by noting both the total number of hierarchical levels and the number of classes in the first two levels. Only half of the search engines were found to be adequately specific.

Overall, the following problems were found with the classification schemes used in browsing lists: main classes are not properly weeded for specialized terms that should be placed lower in the hierarchy; related classes are not logically ordered; and an appropriate level of specificity is not achieved. The author concludes that search engines could benefit from the application of the established principles of library classification.

[315 words]

Analysis

Van der Walt’s proposal that we apply the principles of library science to classifying the web is well taken. Bliss’s principles of “educational and scientific consensus” and “collocation of related classes” are certainly useful in library science and can be appropriately applied to web directories. However, as directory-based search engines become less prominent, the argument loses its punch. Directories are rarely used by themselves anymore, possibly due, in part, to the failings that van der Walt identifies.

This article was written in 1997, which is ancient history in internet years. Of the fourteen (14) search engines that van der Walt examined, nine (9) are still in operation: Argus Clearinghouse, Excite, Galaxy, LookSmart, Lycos, NetGuide, Webcrawler, What-u-seek, and Yahoo. The remaining five (5) are no longer in operation or have merged with other sites: Infoseek (became go.com), Nerd World, G.O.D., Magellan (became Webcrawler), and Point (Lycos Top 5%, which became just Lycos). Of the nine in operation, only three are considered major search engines today (LookSmart, Lycos, Yahoo). Of particular note is the absence of Google (launched in 1998), one of the most popular search engines in existence today, and its directory version, “Open Directory” (also started in 1998).

Since 1997, a great deal of progress has been made in search engine technology. There are now three major kinds of search engines: crawler-based, human-powered directories, and hybrid. The majority of search engines are hybrid, but with a strong emphasis on crawling.

The advent of crawler-based engines has undermined van der Walt’s proposal for cleaning up directory-based search engines. Hybrid search engines are able to retrieve information surprisingly well (when the user knows how to search effectively). One source claims that internet users find what they want when using search engines over 80% of the time. This high estimate is probably due to the fact that only a small number of people are using the internet for serious research. Searches are more likely to be for something that the users “would like to know” rather than something they “need to know”. The percentage would likely be lower if researchers were polled for their search success rates. Regardless, the need to dedicate resources to manually cataloging the web has become less of an issue.

In addition, the size of the web has become prohibitive for human-powered directories. In 1998, the web had approximately 3 million unique sites. It now has almost 9 million sites. The Library of Congress houses 18 million books (out of a collection of 120 million items) and has a staff of thousands to keep up with the constant flow of new titles. Is there any organization in the world that will pay for the web to be hand-classified with a library-approved scheme? Unless there is profit in it, major companies won’t be interested. Unless there is major public demand, governments won’t be interested. And even with a large, dedicated, highly trained staff, directory-based search engines could not keep up with crawling. Van der Walt’s suggestions are moot in this context.

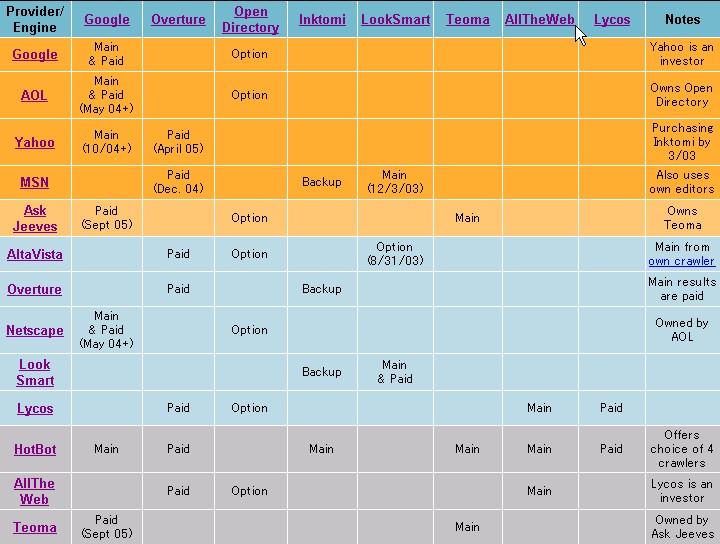

GG=Google, FAST=FAST, AV=AltaVista, INK=Inktomi, NL=Northern Light.

From http://searchenginewatch.com/reports/sizes.html

However, one human-powered directory project seems to have taken van der Walt’s ideas as far as they can go: the Open Directory Project.

The three main directory-type engines are (in order of size) Open Directory Project, LookSmart, and Yahoo. They range from having 10 to 16 main classes, run to about 7 or 8 levels, and are organized in a variety of ways.

The Open Directory Project is a co-operative directory of the web, based on the work of over 53,000 volunteer editors and the submissions of website creators. The ODP scheme has over 460,000 categories and it has explicit instructions on how to submit sites. Website creators choose the location that is most appropriate for their site, and their submissions are reviewed by editors who have volunteered to oversee that node. This co-operative effort has resulted in the placement of over 3.8 million sites in this directory (as of December 2002). Classes are listed alphabetically, organized by topic and region, and cross-referencing is used effectively.

Open Directory Project: 16 main classes, at least 8 levels deep, classes organized alphabetically

LookSmart: 10 main classes, at least 7 levels deep, classes organized randomly

Yahoo: 14 main classes, at least 8 levels deep, classes organized randomly, alphabetically, and by popularity

From http://searchenginewatch.com/reports/directories.html.

LookSmart and Yahoo, on the other hand, rely on a paid staff of approximately 100 to 200 people to review sites and place them in the appropriate categories. A smaller number of editors does not necessarily make for an inferior directory, but it does compromise the directory’s currency: with fewer people monitoring the web, it is difficult to stay on top of the latest submissions. The Open Directory Project alone receives 250 submissions per hour.
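To put the staffing figures quoted above in perspective, here is a rough back-of-the-envelope sketch in Python. It rests on two assumptions that are mine, not the sources’: that submissions arrive around the clock at the Open Directory Project’s quoted rate, and that a paid-staff directory would face a comparable volume spread evenly across its editors. The function names are made up for illustration.

```python
# Back-of-the-envelope editor workload, using figures quoted in the text.
# Assumptions (not from the sources): submissions arrive 24 hours a day,
# work is shared evenly, and every directory faces the same volume.

SUBMISSIONS_PER_HOUR = 250  # Open Directory Project rate quoted above
HOURS_PER_DAY = 24


def daily_submissions(per_hour: int = SUBMISSIONS_PER_HOUR) -> int:
    """Total submissions arriving in one day at a constant hourly rate."""
    return per_hour * HOURS_PER_DAY


def daily_load_per_editor(editors: int, per_hour: int = SUBMISSIONS_PER_HOUR) -> float:
    """Average number of submissions each editor would have to review per day."""
    if editors <= 0:
        raise ValueError("editors must be a positive number")
    return daily_submissions(per_hour) / editors


if __name__ == "__main__":
    staffing = [
        ("Volunteer editors (53,000)", 53_000),
        ("Paid staff, upper estimate (200)", 200),
        ("Paid staff, lower estimate (100)", 100),
    ]
    print(f"Submissions per day: {daily_submissions()}")
    for label, editors in staffing:
        load = daily_load_per_editor(editors)
        print(f"{label}: {load:.2f} submissions per editor per day")
```

Under these assumptions, a staff of 100 to 200 would each need to review roughly 30 to 60 submissions every day just to keep pace, while the same volume spread over tens of thousands of volunteers comes to well under one per person. The numbers are only illustrative, since not all volunteers are active and the paid directories’ actual submission rates are not given here.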

The principles of library science are indeed valuable tools in pondering the chaos that is the internet today. However, many basic library principles surfaced in a world constrained by physical limits of space and human limits of time. The internet is not limited in either way. So, while the basic principles of maintaining order amongst the chaos need to be kept in mind, we need to move on from those principles to create a new, dynamic, unlimited theoretical framework for information retrieval on the internet.

References

Library of Congress (www.loc.gov/about), LookSmart (www.looksmart.com), Online Computer Library Center (www.oclc.org), Open Directory Project (www.dmoz.org), Search Engine Watch (www.searchenginewatch.com), Yahoo (www.yahoo.com)

Also of interest: Who Powers Whom?

Search engines with more than 5 million search hours per month come first and are shaded dark orange. Partnerships with these search engines therefore carry more weight than partnerships with others. Search engines with 2 million or more search hours per month are shaded light orange, and those with 300,000 or more search hours are shaded light blue. Those shaded in gray have no significant search hours reported, but they are shown because of the name recognition they may have among serious searchers.